Building a Placement Prediction System: A Beginner’s Guide to Machine Learning in Education

Introduction

Have you ever wondered how training institutes and colleges can predict which students are likely to get placed in companies? In this comprehensive guide, I’ll walk you through building your very own Placement Prediction Dashboard using Machine Learning – perfect for beginners with zero coding experience!

This project is ideal for computer science students looking to add an impressive portfolio piece that demonstrates real-world application of AI/ML concepts.

🎯 What We’re Building

A machine learning system that predicts whether a student will get placed based on their:

- Degree percentage (the dataset's stand-in for CGPA)

- Work experience

- MBA specialization

- Past academic performance (10th, 12th and MBA percentages)

- Employability test score

Think of it as a “Placement Probability Calculator” that training institutes can use to identify students who need extra support and guidance.

🛠️ Tech Stack (All Free & Beginner-Friendly!)

- Python – The programming language

- Google Colab – Free cloud notebook (no installation needed!)

- Pandas – For data manipulation (like Excel on steroids)

- Scikit-learn – Machine learning library

- Matplotlib & Seaborn – For beautiful data visualizations

Time Required: 60-90 minutes (including learning)

📚 Understanding the Fundamentals

Before we dive into code, let’s understand key concepts with real-world examples:

What is Machine Learning?

Traditional Programming (Old Way):

```
IF CGPA > 8.0 AND projects > 2 THEN placed = Yes
IF CGPA < 6.0 THEN placed = No
```

You manually write every rule (exhausting and limited!)

Machine Learning (Smart Way):

1. Feed the computer 200+ past student records

2. Computer automatically finds patterns

3. Computer predicts new students' placement chances

The beauty? The computer discovers patterns you might never notice!
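To make "finding patterns" concrete, here is a deliberately tiny sketch: instead of hand-writing the CGPA cut-off, the program tries every candidate threshold on some past records and keeps the one that matches the most outcomes. The records and the learn_threshold helper are hypothetical, and this one-feature "decision stump" is far simpler than the models built later in this guide.

```python
# Hypothetical past records: (CGPA, placed?), toy data, not from the Kaggle dataset
past_students = [
    (5.8, False), (6.2, False), (6.9, False), (7.1, True),
    (7.4, False), (7.8, True), (8.2, True), (8.9, True),
]

def learn_threshold(records):
    """Try every observed CGPA as a cut-off and keep the most accurate one.

    The rule being learned is: predict "placed" when CGPA >= threshold.
    """
    best_threshold, best_correct = None, -1
    for threshold, _ in records:
        # Count how many past outcomes this cut-off would have predicted correctly
        correct = sum((cgpa >= threshold) == placed for cgpa, placed in records)
        if correct > best_correct:
            best_threshold, best_correct = threshold, correct
    return best_threshold, best_correct

threshold, correct = learn_threshold(past_students)
print(f"Learned rule: placed if CGPA >= {threshold} ({correct}/{len(past_students)} correct)")
```

On this toy data it settles on 7.1 and gets 7 of 8 records right. The student with 7.4 who was not placed is the kind of case a single rule cannot capture, which is why real models combine several features.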

Train vs Test: The Exam Analogy

Imagine preparing students for a final exam:

- Training Phase (80% data): Teacher works through practice questions from chapters 1-10, students practice

- Testing Phase (20% data): Final exam uses new questions on the same chapters, which students have never seen

Why test on unseen questions? To ensure students truly understood the concepts rather than just memorizing answers.

Similarly, we:

- Train our model on 172 students (it learns patterns)

- Test on 43 new students (proves it learned correctly)
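The 172/43 numbers come from holding out 20% of the 215 records. The sketch below does that arithmetic and a plain shuffle-and-slice split using only the standard library. It mirrors the idea behind scikit-learn's train_test_split, and like it, rounds the test size up. However, simple_split is a hypothetical helper and will not pick the same students as scikit-learn for the same seed.

```python
import math
import random

def simple_split(records, test_size=0.2, seed=42):
    """Shuffle a copy of the records, then slice off a test set.

    Hypothetical helper for illustration: the test-set size is rounded up
    (ceil), but the students chosen will differ from scikit-learn's split.
    """
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is repeatable
    n_test = math.ceil(len(shuffled) * test_size)
    return shuffled[n_test:], shuffled[:n_test]  # (train, test)

student_ids = list(range(215))  # stand-ins for the 215 student records
train, test = simple_split(student_ids)
print(len(train), len(test))  # 172 43
```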

🚀 Building the Project: Step-by-Step

Step 1: Setting Up Google Colab

- Go to Google Colab

- Sign in with your Google account

- Click New Notebook

- You’re ready to code! (No installation needed)

Step 2: Getting the Dataset

We’ll use the Campus Recruitment Dataset from Kaggle:

- Visit: Campus Recruitment Dataset

- Download Placement_Data_Full_Class.csv

- Upload it to Colab (click folder icon → upload button)

Dataset Overview:

- 215 students’ records

- 15 columns (degree percentage, specialization, work experience, etc.)

- Target variable: status (Placed or Not Placed)

Step 3: Import Libraries

```python
import pandas as pd  # Data manipulation
import numpy as np  # Mathematical operations
import matplotlib.pyplot as plt  # Basic plotting
import seaborn as sns  # Advanced visualizations
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder
from sklearn.linear_model import LogisticRegression
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, classification_report
```

Real-world analogy: Think of libraries as toolboxes – each contains specific tools for different tasks!

Step 4: Load and Explore Data

```python
# Load the dataset
df = pd.read_csv('Placement_Data_Full_Class.csv')

# See first 5 students
print(df.head())

# Check column types and missing values (info() prints its own output)
df.info()

# Statistical summary
print(df.describe())
```

What you’ll discover:

- Total students: 215

- Placement rate: ~68% placed, ~32% not placed

- Average degree percentage: 66%

- Key insight: academic scores such as degree percentage are closely linked to placement!
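The placement rate is just a class proportion, and pandas' value_counts(normalize=True) returns it directly. The tiny DataFrame here is hypothetical so the snippet runs on its own. On the real data, you would call the same method on df['status'].

```python
import pandas as pd

# Hypothetical mini-dataset; replace with the real df loaded in Step 4
toy = pd.DataFrame({"status": ["Placed", "Placed", "Not Placed", "Placed"]})

# normalize=True turns raw counts into proportions that sum to 1
rates = toy["status"].value_counts(normalize=True)
print(rates)
print(f"Placement rate: {rates['Placed']:.0%}")  # Placement rate: 75%
```

One consequence worth keeping in mind: with about 68% of students placed, a "model" that always answers Placed would already score about 68% accuracy. That is the baseline the models in Step 8 need to beat.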

Step 5: Visualize the Data

Charts help us spot patterns instantly:

Placement Distribution (Pie Chart):

```python
df['status'].value_counts().plot(kind='pie', autopct='%1.1f%%')
plt.title('Placement Status Distribution')
plt.show()
```

Degree Percentage vs Placement (Box Plot):

```python
sns.boxplot(x='status', y='degree_p', data=df)
plt.title('Degree Percentage vs Placement')
plt.show()
```

Key Finding: Students with degree percentage > 70% have noticeably higher placement rates!
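To check a claim like the 70% finding with numbers rather than by eye, you can group students by whether they cross the threshold and compute the placement rate in each group. The data below is hypothetical. With the real df (before Step 6 encodes status), the same groupby line applies.

```python
import pandas as pd

# Hypothetical records; on the real dataset use df[['degree_p', 'status']]
toy = pd.DataFrame({
    "degree_p": [58.0, 62.5, 66.0, 71.0, 74.5, 80.0],
    "status": ["Not Placed", "Placed", "Not Placed", "Placed", "Placed", "Placed"],
})

# Label each student by which side of the 70% line they fall on
band = toy["degree_p"].gt(70).map({True: "above 70%", False: "70% or below"})

# Mean of a True/False "placed" column = placement rate within each band
rate_by_band = toy["status"].eq("Placed").groupby(band).mean()
print(rate_by_band)
```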

Step 6: Data Preprocessing

Machine learning models only understand numbers, so we need to convert text to numbers:

```python
# Handle missing values: salary is blank for students who were not placed
# df['salary'].fillna(0, inplace=True)  # ❌ Old way (recent pandas warns it will break)
df['salary'] = df['salary'].fillna(0)  # ✅ New correct way

# Convert text to numbers (LabelEncoder numbers labels in alphabetical order)
le = LabelEncoder()
df['gender'] = le.fit_transform(df['gender'])  # F (Female)=0, M (Male)=1
df['status'] = le.fit_transform(df['status'])  # Not Placed=0, Placed=1
df['specialisation'] = le.fit_transform(df['specialisation'])  # Mkt&Fin=0, Mkt&HR=1
```

Real-world analogy: Converting “Male/Female” to 1/0 is like giving students ID numbers instead of names for easier processing.

Why the fillna line changed:

- Old way: fillna(..., inplace=True) modifies the column in place, which may silently do nothing when pandas is working on a copy

- New way: assign the filled column back to df['salary'], which always works

Why it’s a problem: Pandas warns that the inplace pattern will stop working in future versions. It’s like using an old technique that’s being phased out.
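Because LabelEncoder assigns codes in sorted (alphabetical) order, it is worth printing the mapping instead of assuming it. This matters later when you type in a new student: 'Mkt&Fin' sorts before 'Mkt&HR' ('F' comes before 'H'), so it gets 0, not 1. As a sketch, the snippet below keeps one fitted encoder per column in a dictionary (the encoders name is our own choice, and the rows are hypothetical) so each mapping can be inspected and reused.

```python
import pandas as pd
from sklearn.preprocessing import LabelEncoder

# Hypothetical rows using the same text values as the Kaggle columns
toy = pd.DataFrame({
    "gender": ["M", "F", "M"],
    "specialisation": ["Mkt&HR", "Mkt&Fin", "Mkt&Fin"],
    "workex": ["No", "Yes", "No"],
    "status": ["Placed", "Not Placed", "Placed"],
})

encoders = {}  # one fitted encoder per column, kept for reuse on new students
for col in ["gender", "specialisation", "workex", "status"]:
    encoders[col] = LabelEncoder()
    toy[col] = encoders[col].fit_transform(toy[col])
    # classes_ is sorted; a label's position in it is its numeric code
    mapping = {label: code for code, label in enumerate(encoders[col].classes_)}
    print(col, mapping)
```

For a new student, encoders['specialisation'].transform(['Mkt&Fin']) returns array([0]), so the code always matches what the model was trained on.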

Step 7: Split Data into Features and Target

```python
# Features (X) = Input data (what we know about students)
X = df[['gender', 'ssc_p', 'hsc_p', 'degree_p', 'workex',
        'etest_p', 'specialisation', 'mba_p']]

# Target (y) = Output (what we want to predict)
y = df['status']

# Split into train and test sets
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)
```

Result:

- Training: 172 students

- Testing: 43 students
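With a roughly 68/32 class balance and only 43 test students, a purely random split can give the test set a noticeably different mix of placed and unplaced students. train_test_split accepts a stratify argument that keeps the class proportions the same in both parts. The sketch below checks this on synthetic labels (20 ones, 10 zeros), not the real data. It uses its own variable names so it will not overwrite your X_train and friends. Adding stratify=y to your own split would change which students land in each set, so your accuracy numbers would differ from the ones quoted below.

```python
from sklearn.model_selection import train_test_split

# Synthetic labels: 20 "placed" (1) and 10 "not placed" (0)
y_demo = [1] * 20 + [0] * 10
X_demo = [[i] for i in range(len(y_demo))]  # dummy single-feature rows

X_train_demo, X_test_demo, y_train_demo, y_test_demo = train_test_split(
    X_demo, y_demo, test_size=0.2, random_state=42,
    stratify=y_demo,  # keep the 2:1 placed/not-placed ratio in both sets
)
print(len(y_test_demo), sum(y_test_demo))  # 6 test rows, 4 of them placed
```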

Step 8: Build Machine Learning Models

We’ll create TWO models and compare them:

Model 1: Logistic Regression (Simple & Fast)

```python
# Encode the 'workex' column ('No'=0, 'Yes'=1): fit on training rows, reuse on test rows
le_workex = LabelEncoder()
X_train['workex'] = le_workex.fit_transform(X_train['workex'])
X_test['workex'] = le_workex.transform(X_test['workex'])

# Create and train the model
lr_model = LogisticRegression(max_iter=1000)  # Increased max_iter to help convergence
lr_model.fit(X_train, y_train)

# Make predictions
lr_pred = lr_model.predict(X_test)

# Check accuracy
lr_accuracy = accuracy_score(y_test, lr_pred)
print(f"Logistic Regression Accuracy: {lr_accuracy * 100:.2f}%")
```

Common Error: ValueError – “could not convert string to float: 'No'”

What happened: The workex column contains text (“Yes” and “No”), but machine learning models need numbers only. You can’t do math with words!

```python
# Convert 'workex' column: Yes=1, No=0 (fit on the training rows only)
le_workex = LabelEncoder()
X_train['workex'] = le_workex.fit_transform(X_train['workex'])
X_test['workex'] = le_workex.transform(X_test['workex'])  # same mapping for test rows
# Now it looks like: Yes → 1, No → 0 ✅
```

Before vs After:

```
Before: workex = ["Yes", "No", "Yes", "No"]
After:  workex = [1, 0, 1, 0]
```

Real-world analogy: Imagine trying to calculate: "Yes" + "No" = ? → Computer gets confused! 🤯

Convergence Warning – Logistic Regression

What happened: Logistic Regression model didn’t finish learning properly. It’s like a student who ran out of time during an exam!

Why: By default, the solver may take at most 100 optimization steps (max_iter=100). Sometimes it needs more.

```python
# Give the model more time to learn (increase max_iter)
lr_model = LogisticRegression(max_iter=1000)  # ✅ up to 1000 iterations instead of 100
lr_model.fit(X_train, y_train)
```

Simple explanation:

- max_iter=100 (default): the solver may stop after 100 steps before it has converged → “Time’s up! Not fully learned”

- max_iter=1000: up to 1000 steps, which is usually enough for this dataset → “Enough time to finish learning” ✅
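What does an "iteration" mean here? The solver improves the model's weights one step at a time, and max_iter caps how many steps it may take. The sketch below uses plain gradient descent on a tiny hypothetical one-feature dataset. scikit-learn's default solver for LogisticRegression, lbfgs, is a quasi-Newton method that converges much faster, but max_iter limits its steps in the same way. The train_logistic helper is our own illustration, not a scikit-learn function.

```python
import math

# Hypothetical data: degree % rescaled to 0-1, and placed (1) / not placed (0)
xs = [0.50, 0.55, 0.60, 0.65, 0.70, 0.75, 0.80, 0.85]
ys = [0, 0, 0, 1, 0, 1, 1, 1]

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

def train_logistic(xs, ys, n_iter, lr=0.5):
    """Fit p = sigmoid(w*x + b) with n_iter gradient-descent steps.

    Returns (w, b, average log-loss on the training data).
    """
    w = b = 0.0
    n = len(xs)
    for _ in range(n_iter):
        preds = [sigmoid(w * x + b) for x in xs]
        # Gradients of the average log-loss with respect to w and b
        grad_w = sum((p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(p - y for p, y in zip(preds, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    preds = [sigmoid(w * x + b) for x in xs]
    loss = -sum(y * math.log(p) + (1 - y) * math.log(1 - p)
                for p, y in zip(preds, ys)) / n
    return w, b, loss

for n_iter in (100, 1000):
    _, _, loss = train_logistic(xs, ys, n_iter)
    print(f"max_iter={n_iter}: training loss={loss:.4f}")
```

The loss after 1000 steps is lower than after 100: the extra iterations let the optimizer get closer to the best weights. In scikit-learn, scaling the features first (for example, StandardScaler inside make_pipeline) is another common way to help the solver converge in fewer steps.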

Model 2: Random Forest (More Powerful)

```python
# Create and train the model
rf_model = RandomForestClassifier(n_estimators=100, random_state=42)
rf_model.fit(X_train, y_train)

# Make predictions
rf_pred = rf_model.predict(X_test)

# Check accuracy
rf_accuracy = accuracy_score(y_test, rf_pred)
print(f"Random Forest Accuracy: {rf_accuracy * 100:.2f}%")
```

Typical Results (exact numbers depend on your split and library versions):

- Logistic Regression: ~83% accuracy

- Random Forest: ~86% accuracy
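With only 43 students in the test set, each prediction moves accuracy by about 2.3 percentage points, so it helps to turn percentages back into head counts. Accuracy is correct predictions divided by total predictions. The counts 36 and 37 below are simply the whole numbers consistent with ~83% and ~86% of 43, not values read from a real run.

```python
n_test_students = 43  # size of the test set from Step 7

# Accuracy = correct predictions / total predictions
for correct in (36, 37):
    print(f"{correct}/{n_test_students} correct -> {correct / n_test_students:.2%}")

# How much accuracy a single student is worth on this test set
print(f"One student = {1 / n_test_students:.2%}")
```

This prints 83.72%, 86.05% and 2.33%, so a ~3-point gap between the two models can come down to a single student. For a steadier comparison, scikit-learn's cross_val_score averages accuracy over several different train/test splits.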

Step 9: Evaluate Model Performance

```python
# Confusion Matrix (shows correct vs incorrect predictions)
from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, rf_pred)

# Visualize
sns.heatmap(cm, annot=True, fmt='d', cmap='Blues')
plt.title('Confusion Matrix')
plt.xlabel('Predicted')
plt.ylabel('Actual')
plt.show()

# Detailed report
print(classification_report(y_test, rf_pred))
```

Understanding the Confusion Matrix:

```
                     Predicted Not Placed   Predicted Placed
Actual Not Placed            12                    2
Actual Placed                 4                   25
```

- 12 = Correctly predicted “Not Placed”

- 25 = Correctly predicted “Placed”

- 2 + 4 = 6 = Wrong predictions (model’s mistakes)
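The numbers in classification_report come straight from these four counts. The sketch below recomputes accuracy, plus precision and recall for the "Placed" class, by hand from the example matrix above. Your own counts will differ.

```python
# Counts from the example confusion matrix (rows = actual, columns = predicted)
tn, fp = 12, 2   # actual Not Placed: predicted Not Placed / predicted Placed
fn, tp = 4, 25   # actual Placed:     predicted Not Placed / predicted Placed

accuracy = (tp + tn) / (tp + tn + fp + fn)  # share of all predictions that were right
precision = tp / (tp + fp)  # of students predicted "Placed", how many really were
recall = tp / (tp + fn)     # of students actually placed, how many the model caught

print(f"Accuracy:  {accuracy:.2f}")   # 0.86
print(f"Precision: {precision:.2f}")  # 0.93
print(f"Recall:    {recall:.2f}")     # 0.86
```

For an institute using the model to decide who gets extra support, the 2 false positives arguably matter most: they are students predicted to be placed who were not, and so might be overlooked.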

Step 10: Feature Importance (What Matters Most?)

```python
# Get feature importance from Random Forest
importances = rf_model.feature_importances_
features = X.columns

# Create visualization
plt.figure(figsize=(10, 6))
plt.barh(features, importances)
plt.xlabel('Importance Score')
plt.title('Which Factors Matter Most for Placement?')
plt.show()
```

Key Insights (typical findings; your scores will vary with the split):

- Degree Percentage (40%) – Most important!

- MBA Percentage (25%)

- Employability Test Score (20%)

- Work Experience (10%)

- Gender, Specialization (5%)
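The bar chart above plots features in column order, which makes the ranking harder to read. Sorting the (feature, score) pairs first fixes that. scikit-learn normalizes a Random Forest's feature importances so they sum to 1, which is why they can be read as percentages. The names and scores below are hypothetical placeholders so the snippet runs on its own. In your notebook, use zip(X.columns, rf_model.feature_importances_) instead.

```python
# Hypothetical placeholder scores (they sum to 1, like real importances)
demo_features = ["feature_A", "feature_B", "feature_C", "feature_D"]
demo_scores = [0.10, 0.45, 0.05, 0.40]

# Sort (feature, score) pairs from most to least important
ranked = sorted(zip(demo_features, demo_scores), key=lambda pair: pair[1], reverse=True)
for name, score in ranked:
    print(f"{name:<10} {score:.0%}")
```

Passing the sorted names and scores to plt.barh then gives an ordered chart.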

Step 11: User Input Prediction (The Cool Part!)

Now let’s predict for a NEW student:

Option A: Simple Text Input

```python
# New student data (codes must match the LabelEncoder mappings from Step 6)
new_student = {
    'gender': 1,          # 1=Male, 0=Female
    'ssc_p': 75.0,        # 10th percentage
    'hsc_p': 80.0,        # 12th percentage
    'degree_p': 72.0,     # Degree percentage
    'workex': 1,          # 1=Yes, 0=No
    'etest_p': 78.0,      # Employability test score
    'specialisation': 0,  # 0=Mkt&Fin, 1=Mkt&HR (alphabetical order)
    'mba_p': 65.0         # MBA percentage
}

# Convert to a one-row DataFrame with the same columns as the training data
new_student_df = pd.DataFrame([new_student])

# Predict!
prediction = rf_model.predict(new_student_df)[0]
probability = rf_model.predict_proba(new_student_df)[0]

if prediction == 1:
    print("🎉 PREDICTION: PLACED!")
    print(f"Confidence: {probability[1] * 100:.1f}%")
else:
    print("⚠️ PREDICTION: NOT PLACED")
    print(f"Confidence: {probability[0] * 100:.1f}%")
```

Option B: Interactive Form (Professional Look)

```python
import ipywidgets as widgets
from IPython.display import display

# Create input fields (encoded values must match the Step 6 mappings)
gender_input = widgets.Dropdown(options=[('Male', 1), ('Female', 0)], description='Gender:')
ssc_input = widgets.FloatSlider(min=40, max=100, value=75, description='10th %:')
hsc_input = widgets.FloatSlider(min=40, max=100, value=75, description='12th %:')
degree_input = widgets.FloatSlider(min=40, max=100, value=70, description='Degree %:')
workex_input = widgets.Dropdown(options=[('Yes', 1), ('No', 0)], description='Work Exp:')
etest_input = widgets.FloatSlider(min=40, max=100, value=70, description='E-Test %:')
spec_input = widgets.Dropdown(options=[('Mkt&Fin', 0), ('Mkt&HR', 1)], description='Specialization:')
mba_input = widgets.FloatSlider(min=40, max=100, value=65, description='MBA %:')
predict_button = widgets.Button(description='Predict Placement')
output = widgets.Output()

def on_predict_click(b):
    with output:
        output.clear_output()
        # Gather inputs into a DataFrame whose column names match the training data
        student_data = pd.DataFrame([[
            gender_input.value,
            ssc_input.value,
            hsc_input.value,
            degree_input.value,
            workex_input.value,
            etest_input.value,
            spec_input.value,
            mba_input.value,
        ]], columns=['gender', 'ssc_p', 'hsc_p', 'degree_p',
                     'workex', 'etest_p', 'specialisation', 'mba_p'])

        # Predict
        pred = rf_model.predict(student_data)[0]
        prob = rf_model.predict_proba(student_data)[0]
        if pred == 1:
            print(f"✅ PREDICTION: PLACED! (Confidence: {prob[1]*100:.1f}%)")
        else:
            print(f"❌ PREDICTION: NOT PLACED (Confidence: {prob[0]*100:.1f}%)")

predict_button.on_click(on_predict_click)

# Display form
display(gender_input, ssc_input, hsc_input, degree_input,
        workex_input, etest_input, spec_input, mba_input,
        predict_button, output)
```

🎯 Key Takeaways & Insights

After building this project, you’ll discover:

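- Degree percentage was the strongest predictor of placement in this run (about 40% of the total feature importance)

- Work experience noticeably boosts the predicted chances

- Gender contributes little to the model’s predictions (low feature importance), though that alone does not prove hiring practices are fair

- Students scoring 70%+ in their degree had clearly higher placement rates (a useful threshold for institutes to watch)

- Random Forest outperformed Logistic Regression by about 3-5 percentage points, which on a 43-student test set is only one or two students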

💼 Resume Impact

Add this to your resume as:

PLACEMENT PREDICTION SYSTEM | Python, Scikit-learn, Pandas

* Built ML model predicting student placement with 86% accuracy using Random Forest

* Analyzed 215 student records, identified degree percentage as top predictor (40% importance)

* Created interactive prediction interface for real-time placement probability assessment

* GitHub: [your-link] | Live Demo: [colab-link]

🚀 Next Steps & Extensions

Want to take this project further? Try:

- Add more features: Include aptitude test scores, certifications, extracurriculars

- Try deep learning: Implement neural networks using TensorFlow/Keras

- Build a web app: Deploy using Streamlit or Flask

- Real-time data: Connect to live student databases

- Recommendation engine: Suggest courses/skills to improve placement chances

📊 Project Summary

What You Built:

- End-to-end ML pipeline from data loading to prediction

- Two classification models with performance comparison

- Interactive user input system

- Professional data visualizations

Skills Demonstrated:

- Data preprocessing and cleaning

- Exploratory Data Analysis (EDA)

- Machine Learning model training and evaluation

- Feature engineering and importance analysis

- Python programming with ML libraries

Time Investment: 60-90 minutes

Difficulty Level: Beginner-friendly

Resume Value: ⭐⭐⭐⭐⭐

🎓 Conclusion

Congratulations! You’ve just built a real-world machine learning system that training institutes actually need. This project demonstrates your ability to:

✅ Work with real datasets

✅ Build and compare ML models

✅ Create actionable insights from data

✅ Build user-facing prediction systems

Most importantly, you’ve learned that AI/ML isn’t magic – it’s patterns in data, automated and scaled.

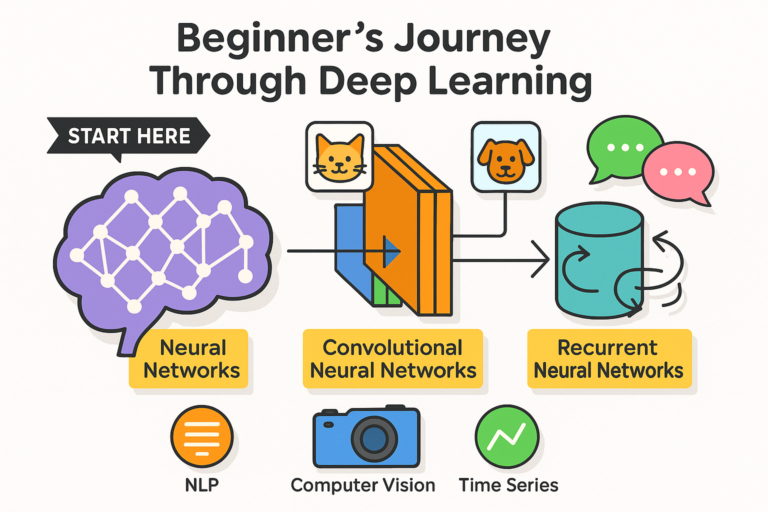

Ready to build more? Check out my next tutorial: “Building a Neural Network for Handwritten Digit Recognition using TensorFlow” (30-minute project perfect for understanding deep learning basics!)

📚 Resources

- Dataset: Kaggle – Campus Recruitment

- Google Colab: colab.research.google.com

- Scikit-learn Docs: scikit-learn.org

- Full Code: Download complete notebook

💬 Questions?

Drop your questions in the comments! I respond to every query.

Found this helpful? Share it with your classmates and give it a ⭐ on GitHub!

Written by [Your Name] | Aspiring Data Scientist | Building ML projects one step at a time 🚀

Tags: #MachineLearning #Python #DataScience #StudentProjects #BeginnerFriendly #AI #PlacementPrediction #ScikitLearn #CollegeProjects